Homoscedasticity & Heteroscedasticity in ML

Contents

- Detection of Heteroscedasticity through Formal tests

- What Is Homoscedasticity & Heteroscedasticity?

- Assumptions of Simple Linear Regression: What will be the effect of the error terms not being homoscedastic in nature?

- Little or No autocorrelation


Heteroscedasticity is a hard word to pronounce, but it doesn’t need to be a difficult concept to understand. Linear regression assumes that, for all instances, the error terms have the same variance; this is called homoscedasticity. When that variance changes across observations, the errors are heteroscedastic. This often happens when the smallest and the largest values of a variable are very far apart, and how much it matters depends on the domain you’re working in.

One informal way of detecting heteroskedasticity is to create a residual plot, in which you plot the least squares residuals against the explanatory variable, or against the fitted values ŷ if it’s a multiple regression. If there is an evident pattern in the plot, such as a spread that widens or narrows, then heteroskedasticity is present. Similarly, a normal probability plot or a normal quantile (Q-Q) plot can be used to verify whether the error terms are normally distributed; a bow-shaped pattern of deviations in these plots reveals that the errors aren’t normally distributed.
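Without assuming a plotting library, the visual check can be approximated numerically: sort the observations by fitted value, split them into groups, and compare the residual spread in each group. The sketch below uses only the Python standard library; the helper names (`ols_simple`, `residual_spread_by_fitted`), the simulated data, and the choice of three groups are illustrative assumptions rather than part of the original method.

```python
import random
import statistics


def ols_simple(x, y):
    """Closed-form least-squares intercept and slope for one explanatory variable."""
    mx, my = statistics.fmean(x), statistics.fmean(y)
    sxx = sum((xi - mx) ** 2 for xi in x)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return my - slope * mx, slope


def residual_spread_by_fitted(x, y, groups=3):
    """Residual standard deviation within equal-sized groups ordered by fitted value."""
    b0, b1 = ols_simple(x, y)
    pairs = sorted((b0 + b1 * xi, yi - (b0 + b1 * xi)) for xi, yi in zip(x, y))
    size = len(pairs) // groups
    return [
        statistics.stdev([resid for _, resid in pairs[g * size:(g + 1) * size]])
        for g in range(groups)
    ]


rng = random.Random(0)
x = [rng.uniform(1, 10) for _ in range(300)]
# Simulated data: the noise standard deviation grows with x (a fan-shaped residual plot).
y_fan = [2 + 3 * xi + rng.gauss(0, 0.5 * xi) for xi in x]
# Simulated data with a constant noise standard deviation, for comparison.
y_flat = [2 + 3 * xi + rng.gauss(0, 2) for xi in x]

print("fan-shaped:", residual_spread_by_fitted(x, y_fan))
print("constant:  ", residual_spread_by_fitted(x, y_flat))
```

A fan-shaped residual plot shows up here as group spreads that grow steadily from the low-fitted group to the high-fitted group; roughly equal spreads are what homoscedastic errors look like.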

The Breusch-Pagan test checks a null hypothesis against an alternative hypothesis. The null hypothesis is that the error variances are all equal, whereas the alternative hypothesis states that the error variances are a multiplicative function of one or more variables. If heteroscedasticity is present in the data, the error variance differs across the values of the explanatory variables, which violates the constant-variance assumption. It is therefore important to test for heteroscedasticity and apply corrective measures if it is present.
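A minimal sketch of the Breusch-Pagan test for a single explanatory variable, written with the standard library only. It uses the n·R² form of the statistic (the studentized variant proposed by Koenker) rather than the original Breusch-Pagan scaling; the function names and the simulated data are hypothetical.

```python
import math
import random
import statistics


def ols_simple(x, y):
    """Closed-form least-squares intercept and slope for one explanatory variable."""
    mx, my = statistics.fmean(x), statistics.fmean(y)
    sxx = sum((xi - mx) ** 2 for xi in x)
    slope = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y)) / sxx
    return my - slope * mx, slope


def r_squared(x, y):
    """R^2 of the simple regression of y on x (clamped at 0 against rounding error)."""
    b0, b1 = ols_simple(x, y)
    my = statistics.fmean(y)
    ss_res = sum((yi - b0 - b1 * xi) ** 2 for xi, yi in zip(x, y))
    ss_tot = sum((yi - my) ** 2 for yi in y)
    return max(0.0, 1 - ss_res / ss_tot)


def breusch_pagan(x, y):
    """n * R^2 (Koenker) form of the Breusch-Pagan test with one explanatory variable."""
    b0, b1 = ols_simple(x, y)
    squared_resid = [(yi - b0 - b1 * xi) ** 2 for xi, yi in zip(x, y)]
    # Auxiliary regression: do the squared residuals move with x?
    lm = len(x) * r_squared(x, squared_resid)
    # Under the null of equal variances, lm is approximately chi-square with 1 df,
    # whose survival function is erfc(sqrt(lm / 2)).
    return lm, math.erfc(math.sqrt(lm / 2))


rng = random.Random(1)
x = [rng.uniform(1, 10) for _ in range(300)]
y_equal = [2 + 3 * xi + rng.gauss(0, 2) for xi in x]       # constant error variance
y_fan = [2 + 3 * xi + rng.gauss(0, 0.5 * xi) for xi in x]  # variance grows with x

for label, y in [("equal variance", y_equal), ("fan-shaped", y_fan)]:
    lm, p = breusch_pagan(x, y)
    print(f"{label}: LM = {lm:.2f}, p = {p:.4g}")
```

A p-value below the chosen significance level (for example 0.05) rejects the null hypothesis of equal error variances.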

No or low multicollinearity is the fifth assumption of linear regression. Multicollinearity refers to a situation where several independent variables in a multiple regression model are closely correlated with one another; it generally occurs when there are high correlations between two or more predictor variables. It inflates the standard errors of the coefficients, which in turn means that the coefficients for some independent variables may be found not to be significantly different from zero. In other words, by overinflating the standard errors, multicollinearity makes some variables statistically insignificant when they should be important.
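The text does not name a diagnostic, but a common one for multicollinearity is the variance inflation factor, VIF_j = 1 / (1 - R_j²), where R_j² comes from regressing predictor j on the other predictors. With only two predictors, R_j² is simply their squared correlation, which keeps this sketch short. The study, social-media, and sleep hours below are simulated in the spirit of this article's student example, and the helper names are hypothetical.

```python
import math
import random
import statistics


def pearson_r(a, b):
    """Sample Pearson correlation coefficient."""
    ma, mb = statistics.fmean(a), statistics.fmean(b)
    cov = sum((ai - ma) * (bi - mb) for ai, bi in zip(a, b))
    var_a = sum((ai - ma) ** 2 for ai in a)
    var_b = sum((bi - mb) ** 2 for bi in b)
    return cov / math.sqrt(var_a * var_b)


def vif_two_predictors(x1, x2):
    """VIF of either predictor when the model has exactly two predictors: 1 / (1 - r^2)."""
    r = pearson_r(x1, x2)
    return 1 / (1 - r ** 2)


rng = random.Random(2)
study_hours = [rng.uniform(0, 10) for _ in range(200)]
# Simulated: social-media time drops almost one-for-one as study time rises.
social_hours = [12 - s + rng.gauss(0, 0.5) for s in study_hours]
# Simulated independently of study time.
sleep_hours = [rng.uniform(5, 9) for _ in range(200)]

print(vif_two_predictors(study_hours, social_hours))  # large: strongly collinear pair
print(vif_two_predictors(study_hours, sleep_hours))   # close to 1: nearly unrelated pair
```

Rules of thumb vary, but VIF values above roughly 5 to 10 are often treated as a warning sign of problematic multicollinearity.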

Consider students who reported their daily activities, such as studying, sleeping, and using social media. Longer hours of study reduce the time spent on social media. Similarly, some students may get lower scores despite sleeping less. One can say that putting in more hours of study does not necessarily guarantee higher marks, but the relationship is still a linear one. The point is that the variables are related, but not to the point of multicollinearity.

The Goldfeld-Quandt test is useful for detecting heteroscedasticity.
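A rough sketch of the Goldfeld-Quandt test, assuming the error variance increases with a single explanatory variable x: sort by x, drop a middle slice of observations, fit separate regressions to the low and high groups, and compare their residual variances with an F ratio. The 20% drop fraction, the function names, and the simulated data are illustrative choices. The Python standard library has no F distribution, so the statistic would be compared with an F critical value from a table or a statistics package.

```python
import random
import statistics


def rss_simple(pairs):
    """Residual sum of squares of the least-squares line through a list of (x, y) pairs."""
    xs = [p[0] for p in pairs]
    ys = [p[1] for p in pairs]
    mx, my = statistics.fmean(xs), statistics.fmean(ys)
    sxx = sum((xi - mx) ** 2 for xi in xs)
    slope = sum((xi - mx) * (yi - my) for xi, yi in zip(xs, ys)) / sxx
    intercept = my - slope * mx
    return sum((yi - intercept - slope * xi) ** 2 for xi, yi in zip(xs, ys))


def goldfeld_quandt(x, y, drop_fraction=0.2):
    """F ratio of residual variance in the high-x group to that in the low-x group."""
    pairs = sorted(zip(x, y))                                # order observations by x
    n_side = (len(pairs) - int(len(pairs) * drop_fraction)) // 2
    low, high = pairs[:n_side], pairs[-n_side:]              # the middle slice is dropped
    df = n_side - 2                                          # two coefficients per fit
    f_stat = (rss_simple(high) / df) / (rss_simple(low) / df)
    return f_stat, (df, df)


rng = random.Random(3)
x = [rng.uniform(1, 10) for _ in range(300)]
y = [2 + 3 * xi + rng.gauss(0, 0.5 * xi) for xi in x]  # simulated fan-shaped data
f_stat, dfs = goldfeld_quandt(x, y)
print(f"F = {f_stat:.2f} with {dfs} degrees of freedom")
```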

If the standard errors are biased, the hypothesis tests and confidence intervals built on them will be incorrect, even though the OLS coefficient estimates themselves remain unbiased. Heteroscedasticity is also likely to produce p-values that are smaller than they should be. This is because the variance of the coefficient estimates has increased, but the standard OLS formula does not detect it, so the OLS model calculates p-values using an underestimated variance. This can lead us to conclude incorrectly that the regression coefficients are significant when they actually are not.
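The claim that heteroscedasticity leads to understated variances and overly small p-values can be checked with a small simulation. In the sketch below the true slope is zero, so every "significant" result is a false positive. The setup (noise that grows with |x|, 50 observations, 1,000 repetitions, and a normal cut-off of 1.96 instead of the exact t critical value) is an assumption chosen for illustration.

```python
import math
import random
import statistics


def slope_and_conventional_se(x, y):
    """OLS slope and its usual standard error, which assumes constant error variance."""
    n = len(x)
    mx, my = statistics.fmean(x), statistics.fmean(y)
    sxx = sum((xi - mx) ** 2 for xi in x)
    slope = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y)) / sxx
    intercept = my - slope * mx
    s2 = sum((yi - intercept - slope * xi) ** 2 for xi, yi in zip(x, y)) / (n - 2)
    return slope, math.sqrt(s2 / sxx)


def false_positive_rate(heteroscedastic, reps=1000, n=50, seed=4):
    """Share of simulated datasets in which a truly zero slope is declared significant."""
    rng = random.Random(seed)
    rejections = 0
    for _ in range(reps):
        x = [rng.uniform(-5, 5) for _ in range(n)]
        # The true slope is zero. The noise sd is either |x| or a constant 2.9,
        # roughly the root-mean-square of |x| over this range.
        y = [rng.gauss(0, abs(xi) if heteroscedastic else 2.9) for xi in x]
        slope, se = slope_and_conventional_se(x, y)
        if abs(slope / se) > 1.96:  # normal approximation to a two-sided 5% t test
            rejections += 1
    return rejections / reps


rate_equal = false_positive_rate(heteroscedastic=False)
rate_fan = false_positive_rate(heteroscedastic=True)
print(f"constant variance: {rate_equal:.3f}")
print(f"variance grows with |x|: {rate_fan:.3f}")
```

With constant variance the false-positive rate should stay near the nominal 5%; when the variance grows with |x| it comes out noticeably higher, because the conventional standard error is too small.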

Detection of Heteroscedasticity through Formal tests

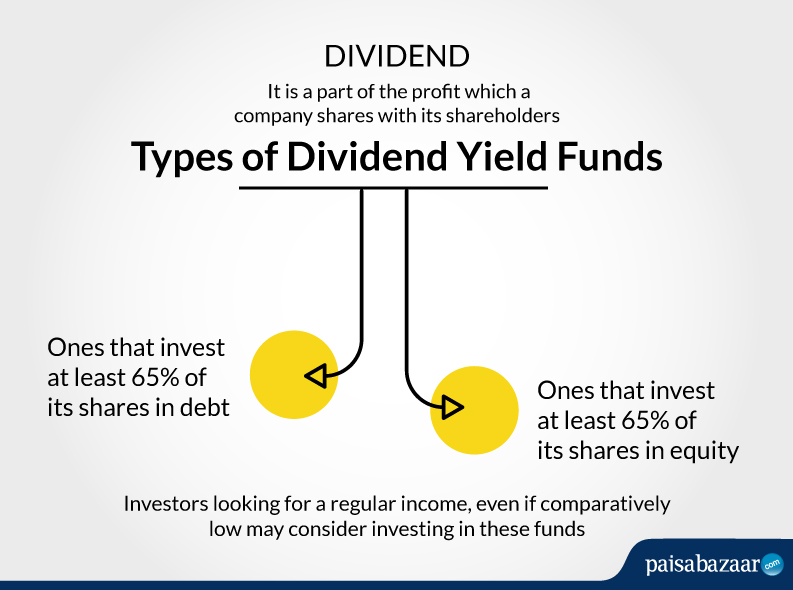

One of the assumptions of the classical linear regression model is that there is no heteroscedasticity. Specifically, heteroscedasticity is a systematic change in the spread of the residuals over the range of measured values. Heteroscedasticity is a problem because ordinary least squares regression assumes that all residuals are drawn from a population that has a constant variance. When the error term is not homoscedastic, the usual remedy is to apply a transformation that makes the error variance homoscedastic. One advantage of understanding the assumptions of linear regression is that it helps you make reasonable predictions. When you add more variables, such as the number of hours slept and the hours spent on social media, the model becomes a multiple regression.
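One such transformation, sketched below, is weighted least squares by division: if the error standard deviation is assumed to be proportional to x, dividing every term of the model by x leaves an error with constant variance. The proportionality assumption, the simulated data, and the helper names are illustrative; with real data the form of the variance has to be judged or estimated, and a log transformation of y is another common choice.

```python
import random
import statistics


def ols_simple(x, y):
    """Closed-form least-squares intercept and slope for one explanatory variable."""
    mx, my = statistics.fmean(x), statistics.fmean(y)
    sxx = sum((xi - mx) ** 2 for xi in x)
    slope = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y)) / sxx
    return my - slope * mx, slope


def spread_ratio(x, resid):
    """Residual sd for the upper half of x divided by the residual sd for the lower half."""
    pairs = sorted(zip(x, resid))
    half = len(pairs) // 2
    low = [r for _, r in pairs[:half]]
    high = [r for _, r in pairs[half:]]
    return statistics.stdev(high) / statistics.stdev(low)


rng = random.Random(5)
x = [rng.uniform(1, 10) for _ in range(400)]
# Simulated model y = 2 + 3x + error, with the error sd assumed proportional to x (0.5 * x).
y = [2 + 3 * xi + rng.gauss(0, 0.5 * xi) for xi in x]

b0, b1 = ols_simple(x, y)
raw_resid = [yi - b0 - b1 * xi for xi, yi in zip(x, y)]

# Dividing the model by x gives y/x = 3 + 2 * (1/x) + error/x, and error/x has a
# constant sd of 0.5. This is weighted least squares with weights 1/x^2.
z = [yi / xi for xi, yi in zip(x, y)]
w = [1 / xi for xi in x]
c0, c1 = ols_simple(w, z)  # c0 estimates the original slope 3, c1 the original intercept 2
new_resid = [zi - c0 - c1 * wi for wi, zi in zip(w, z)]

print(f"spread ratio before: {spread_ratio(x, raw_resid):.2f}")
print(f"spread ratio after:  {spread_ratio(x, new_resid):.2f}")
print(f"recovered slope {c0:.2f}, intercept {c1:.2f}")
```

Note that the roles of the coefficients swap after the division: the intercept of the transformed regression estimates the original slope, and its slope estimates the original intercept.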

Homoscedasticity is the fourth assumption of linear regression. A scatter plot of residuals versus predicted values is a good way to check for homoscedasticity. The White test is similar to the Breusch-Pagan test, but it allows the independent variables to have a nonlinear and interactive effect on the error variance.
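A standard-library sketch of the White test for a model with one regressor. With a single x there are no cross-product terms, so the auxiliary regression of the squared residuals uses x and x² only, and n·R² is compared with a chi-square distribution with 2 degrees of freedom. The small Gaussian-elimination solver and all function names are hypothetical helpers written for this example, not a library API.

```python
import math
import random
import statistics


def solve_linear(a, b):
    """Solve a small square system a @ beta = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [bi] for row, bi in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    beta = [0.0] * n
    for r in range(n - 1, -1, -1):
        beta[r] = (m[r][n] - sum(m[r][c] * beta[c] for c in range(r + 1, n))) / m[r][r]
    return beta


def ols_fitted(columns, y):
    """Fitted values from regressing y on an intercept plus the given columns (normal equations)."""
    rows = [[1.0, *vals] for vals in zip(*columns)]
    k = len(rows[0])
    xtx = [[sum(r[i] * r[j] for r in rows) for j in range(k)] for i in range(k)]
    xty = [sum(r[i] * yi for r, yi in zip(rows, y)) for i in range(k)]
    beta = solve_linear(xtx, xty)
    return [sum(b * v for b, v in zip(beta, r)) for r in rows]


def white_test(x, y):
    """White test with one regressor: regress squared residuals on x and x^2."""
    fitted = ols_fitted([x], y)
    sq_resid = [(yi - fi) ** 2 for yi, fi in zip(y, fitted)]
    aux_fitted = ols_fitted([x, [xi ** 2 for xi in x]], sq_resid)
    mean_sq = statistics.fmean(sq_resid)
    ss_res = sum((s - f) ** 2 for s, f in zip(sq_resid, aux_fitted))
    ss_tot = sum((s - mean_sq) ** 2 for s in sq_resid)
    lm = len(x) * max(0.0, 1 - ss_res / ss_tot)
    # Two auxiliary regressors: chi-square(2) has survival function exp(-lm / 2).
    return lm, math.exp(-lm / 2)


rng = random.Random(6)
x = [rng.uniform(1, 10) for _ in range(300)]
y = [2 + 3 * xi + rng.gauss(0, 0.5 * xi) for xi in x]  # simulated fan-shaped data
lm, p = white_test(x, y)
print(f"LM = {lm:.2f}, p = {p:.4g}")
```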

- We have seen the concept of linear regression and the assumptions one has to make to determine the value of the dependent variable.

- Several modifications of White’s method of computing heteroscedasticity-consistent standard errors have been proposed as corrections with better finite-sample properties.



- While OLS is computationally feasible and easy to use in any econometric test, it is important to know the underlying assumptions of OLS regression.

In an overfitting condition, a misleadingly high R-squared is obtained, even when the model actually has a reduced ability to predict. The error term is critical because it accounts for the variation in the dependent variable that the independent variables do not explain. Therefore, the average value of the error term should be zero for the model to be unbiased. Predicting the amount of harvest from the rainfall is a simple, everyday example of linear regression.
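A small illustration of the harvest-and-rainfall example, fitted by ordinary least squares. The figures are made up for illustration only. The sketch computes R-squared and shows that, when the model includes an intercept, the OLS residuals average to zero up to rounding. That holds by construction, so it cannot by itself confirm the zero-mean assumption about the true errors.

```python
import statistics

# Made-up illustrative figures: rainfall in mm and harvest in tonnes per hectare.
rainfall = [400, 450, 500, 550, 600, 650, 700, 750]
harvest = [2.1, 2.4, 2.4, 2.9, 3.0, 3.3, 3.4, 3.8]

mx, my = statistics.fmean(rainfall), statistics.fmean(harvest)
sxx = sum((x - mx) ** 2 for x in rainfall)
slope = sum((x - mx) * (y - my) for x, y in zip(rainfall, harvest)) / sxx
intercept = my - slope * mx

residuals = [y - (intercept + slope * x) for x, y in zip(rainfall, harvest)]
ss_res = sum(e ** 2 for e in residuals)
ss_tot = sum((y - my) ** 2 for y in harvest)
r_squared = 1 - ss_res / ss_tot

print(f"harvest = {intercept:.3f} + {slope:.5f} * rainfall")
print(f"R^2 = {r_squared:.3f}")
# With an intercept in the model, OLS residuals average to zero up to rounding error.
print(f"mean residual = {statistics.fmean(residuals):.2e}")
```

Because R-squared here is measured on the same data used for fitting, it says nothing about how well the line would predict a new season; that gap is what the overfitting warning above is about.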

What Is Homoscedasticity & Heteroscedasticity?

Simple linear regression models the relationship between two variables. One is the predictor, or independent variable, whereas the other is the dependent variable, also known as the response. A linear regression aims to find a statistical relationship between the two variables. First, linear regression needs the relationship between the independent and dependent variables to be linear. It is also important to check for outliers, since linear regression is sensitive to outlier effects.
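To see the outlier sensitivity concretely, the sketch fits a line to ten points lying exactly on y = 1 + 2x, then refits after replacing a single y value with an outlier. The data and the function name `fit_line` are illustrative.

```python
import statistics


def fit_line(x, y):
    """Least-squares intercept and slope for one explanatory variable."""
    mx, my = statistics.fmean(x), statistics.fmean(y)
    sxx = sum((xi - mx) ** 2 for xi in x)
    slope = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y)) / sxx
    return my - slope * mx, slope


x = list(range(1, 11))
y_clean = [1 + 2 * xi for xi in x]   # points exactly on y = 1 + 2x
y_outlier = y_clean[:-1] + [60]      # same data, but the last y value (21) replaced by 60

print(fit_line(x, y_clean))    # intercept 1, slope 2
print(fit_line(x, y_outlier))  # one point pulls the slope up to about 4.13
```

The single outlier sits at the edge of the x range, where it has the most leverage, so it roughly doubles the estimated slope.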

When predictors are highly correlated, one predictor variable can be used to predict another. This creates redundant information and skews the results of a regression model. Multivariate normality is the third assumption of linear regression. The linear regression analysis requires all variables to be multivariate normal, which in practice is usually checked on the residuals. As sample sizes increase, normality of the residuals becomes less critical, because the coefficient estimates are then approximately normal anyway.
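A common numeric companion to the normal probability plot, not named in the text, is the Jarque-Bera test. It combines the sample skewness S and kurtosis K of the residuals into JB = n/6 · (S² + (K - 3)²/4) and compares it with a chi-square distribution with 2 degrees of freedom. Note that it checks the residuals of a single regression rather than full multivariate normality. The simulated data and the helper names are hypothetical.

```python
import math
import random
import statistics


def ols_residuals(x, y):
    """Residuals from the least-squares line of y on x."""
    mx, my = statistics.fmean(x), statistics.fmean(y)
    sxx = sum((xi - mx) ** 2 for xi in x)
    slope = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y)) / sxx
    intercept = my - slope * mx
    return [yi - intercept - slope * xi for xi, yi in zip(x, y)]


def jarque_bera(resid):
    """Jarque-Bera statistic and its chi-square(2) p-value for a list of residuals."""
    n = len(resid)
    m = statistics.fmean(resid)
    m2 = sum((e - m) ** 2 for e in resid) / n
    m3 = sum((e - m) ** 3 for e in resid) / n
    m4 = sum((e - m) ** 4 for e in resid) / n
    skew = m3 / m2 ** 1.5
    kurt = m4 / m2 ** 2
    jb = n / 6 * (skew ** 2 + (kurt - 3) ** 2 / 4)
    return jb, math.exp(-jb / 2)  # chi-square(2) survival function


rng = random.Random(7)
x = [rng.uniform(0, 10) for _ in range(500)]
y_normal = [1 + 2 * xi + rng.gauss(0, 1) for xi in x]
# Simulated skewed errors: exponential noise shifted to mean zero.
y_skewed = [1 + 2 * xi + rng.expovariate(1.0) - 1 for xi in x]

print(jarque_bera(ols_residuals(x, y_normal)))
print(jarque_bera(ols_residuals(x, y_skewed)))
```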

A lack of understanding of the OLS assumptions can result in misuse of OLS and incorrect results for the econometric test performed. These assumptions are extremely important, and one cannot simply neglect them. That said, the OLS assumptions are often violated in practice. However, that should not stop you from conducting your econometric test. Rather, when an assumption is violated, the best way to a dependable econometric test is to apply the right fixes and then run the linear regression model.

Assumptions of Simple Linear Regression: What will be the effect of the error terms not being homoscedastic in nature?

If these assumptions hold, you get the best possible estimates. In statistics, unbiased estimators with the smallest variance are termed efficient; under these assumptions, OLS is the best linear unbiased estimator (the Gauss-Markov theorem). In this case, the assumptions of the classical linear regression model hold when all the variables are considered together.

Even when the relationship between independent variables is strong but not perfect, it still causes problems for OLS estimators. The expected value of the OLS error term should be zero given the values of the independent variables. Heteroscedasticity-consistent standard errors (HCSE), while still biased in finite samples, improve upon the usual OLS standard errors. HCSE is a consistent estimator of standard errors in regression models with heteroscedasticity.
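A sketch of White's heteroscedasticity-consistent (HC0) standard error for the slope of a simple regression, together with two of the later modifications mentioned earlier in this article: HC1, a degrees-of-freedom rescaling, and HC3, which divides each squared residual by (1 - h_i)², where h_i is the observation's leverage. It uses only the standard library; the function name and the simulated data are illustrative assumptions.

```python
import math
import random
import statistics


def slope_standard_errors(x, y):
    """Conventional, HC0, HC1 and HC3 standard errors for the slope of a simple regression."""
    n = len(x)
    mx, my = statistics.fmean(x), statistics.fmean(y)
    dev = [xi - mx for xi in x]
    sxx = sum(d * d for d in dev)
    slope = sum(d * (yi - my) for d, yi in zip(dev, y)) / sxx
    intercept = my - slope * mx
    resid = [yi - intercept - slope * xi for xi, yi in zip(x, y)]
    leverage = [1 / n + d * d / sxx for d in dev]  # diagonal of the hat matrix

    conventional_var = sum(e * e for e in resid) / (n - 2) / sxx
    # White's sandwich estimator, written out for the slope: sum(d_i^2 * e_i^2) / sxx^2.
    hc0_var = sum(d * d * e * e for d, e in zip(dev, resid)) / sxx ** 2
    hc1_var = hc0_var * n / (n - 2)  # degrees-of-freedom rescaling
    hc3_var = sum((d * e / (1 - h)) ** 2 for d, e, h in zip(dev, resid, leverage)) / sxx ** 2
    return {
        "conventional": math.sqrt(conventional_var),
        "HC0": math.sqrt(hc0_var),
        "HC1": math.sqrt(hc1_var),
        "HC3": math.sqrt(hc3_var),
    }


rng = random.Random(8)
x = [rng.uniform(-5, 5) for _ in range(1000)]
# Simulated data whose noise sd grows with distance from the centre of the x range.
y = [1 + 2 * xi + rng.gauss(0, 0.5 + abs(xi)) for xi in x]
for name, se in slope_standard_errors(x, y).items():
    print(f"{name}: {se:.4f}")
```

In data like this, where the noise is largest at extreme x values, the robust standard errors come out larger than the conventional one, which is the understatement HCSE is meant to correct.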

It becomes difficult for the model to estimate the relationship between each independent variable and the target variable separately, because the features tend to change in unison. If the variance of the errors around the regression line varies a lot, the regression model may be poorly defined. You can also check for autocorrelation by viewing a time-series plot of the residuals. Throughout, assume that all the other assumptions about the error term in the classical regression model, apart from homoscedasticity, are satisfied.
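The text describes a visual check; a standard numeric counterpart, not named in the text, is the Durbin-Watson statistic, DW = Σ(e_t - e_{t-1})² / Σ e_t². It is close to 2 when the residuals show no first-order autocorrelation and falls toward 0 under positive autocorrelation. In the sketch, the AR(1) coefficient of 0.8, the time trend, and the function names are assumptions made for illustration.

```python
import random
import statistics


def ols_residuals(x, y):
    """Residuals from the least-squares line of y on x."""
    mx, my = statistics.fmean(x), statistics.fmean(y)
    sxx = sum((xi - mx) ** 2 for xi in x)
    slope = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y)) / sxx
    intercept = my - slope * mx
    return [yi - intercept - slope * xi for xi, yi in zip(x, y)]


def durbin_watson(resid):
    """Sum of squared successive differences divided by the sum of squared residuals."""
    num = sum((resid[i] - resid[i - 1]) ** 2 for i in range(1, len(resid)))
    return num / sum(e * e for e in resid)


rng = random.Random(9)
t = list(range(300))  # time index

iid_errors = [rng.gauss(0, 1) for _ in t]
ar_errors = [rng.gauss(0, 1)]
for _ in t[1:]:
    # Simulated AR(1) errors: each error keeps 80% of the previous one plus fresh noise.
    ar_errors.append(0.8 * ar_errors[-1] + rng.gauss(0, 1))

y_iid = [5 + 0.1 * ti + e for ti, e in zip(t, iid_errors)]
y_ar = [5 + 0.1 * ti + e for ti, e in zip(t, ar_errors)]

dw_iid = durbin_watson(ols_residuals(t, y_iid))
dw_ar = durbin_watson(ols_residuals(t, y_ar))
print(f"independent errors: DW = {dw_iid:.2f}")  # expected near 2
print(f"AR(1) errors: DW = {dw_ar:.2f}")         # expected well below 2
```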

Little or No autocorrelation

The correlation coefficient’s numerical value ranges from -1 to 1. If the variance of the residuals is unequal across the regression line, the data are said to be heteroscedastic. In this case, the residuals can form a bow-tie, arrow, or other non-symmetric shape.

The rule is that one observation of the error term should not allow us to predict the next observation. The correlation coefficient does not change its magnitude under a change of origin and scale. A formal test gives a p-value, which is then compared with the significance level (α), here 0.05. If the p-value is greater than the significance level, we fail to reject the null hypothesis.
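The range of the correlation coefficient and its behaviour under a change of origin and scale can be checked directly. The sketch converts made-up Celsius readings to Fahrenheit (a shift of origin by 32 and a rescaling by 1.8) and shows that the correlation with a second, made-up variable is unchanged, while a negative scale factor flips only the sign. The readings and the sales figures are invented for illustration.

```python
import math
import statistics


def pearson_r(a, b):
    """Sample Pearson correlation coefficient."""
    ma, mb = statistics.fmean(a), statistics.fmean(b)
    cov = sum((ai - ma) * (bi - mb) for ai, bi in zip(a, b))
    var_a = sum((ai - ma) ** 2 for ai in a)
    var_b = sum((bi - mb) ** 2 for bi in b)
    return cov / math.sqrt(var_a * var_b)


# Made-up illustrative readings: temperature in degrees Celsius and daily sales in units.
celsius = [14.0, 16.5, 19.0, 21.5, 24.0, 26.5, 29.0]
sales = [110, 128, 150, 149, 185, 210, 204]

fahrenheit = [32 + 1.8 * c for c in celsius]  # change of origin (+32) and of scale (x 1.8)
negated = [-c for c in celsius]               # a negative scale factor

r_c = pearson_r(celsius, sales)
print(r_c, pearson_r(fahrenheit, sales))  # equal up to floating-point rounding
print(pearson_r(negated, sales))          # same magnitude, opposite sign
print(pearson_r(celsius, fahrenheit))     # 1.0 up to rounding: an exact linear relationship
```

The last line also shows why Celsius and Fahrenheit versions of the same temperature could never both serve as predictors: they are perfectly correlated.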


This assumption of the classical linear regression model states that the independent variables should not have a direct relationship among themselves. For example, any change in the Centigrade value of a temperature brings about a corresponding change in its Fahrenheit value, so using both as predictors would add no new information. More generally, linear regression is a method to predict the dependent variable based on the values of independent variables, and it can be used when we want to predict some continuous quantity.
